Content Moderation Platform

I designed a content moderation web application that uses AI and machine learning tools to help a moderation team catch videos that violate my client's upload guidelines. I interviewed the team and designed a proof of concept (POC) built around their pain points. Working with a machine learning division, I brainstormed current and emerging AI tools that could be used in the application. My final deliverable was an interactive prototype.

DEFINING THE PROBLEM

BACKGROUND

Over five billion videos are uploaded online daily, making it difficult for social media platforms to moderate every video accurately. Anyone can share their daily lives, opinions, or activities, and some of that content could be used for malicious purposes. It is the job of a content moderation team to catch anything that may go against the platform's guidelines and to review videos that have been flagged by users.

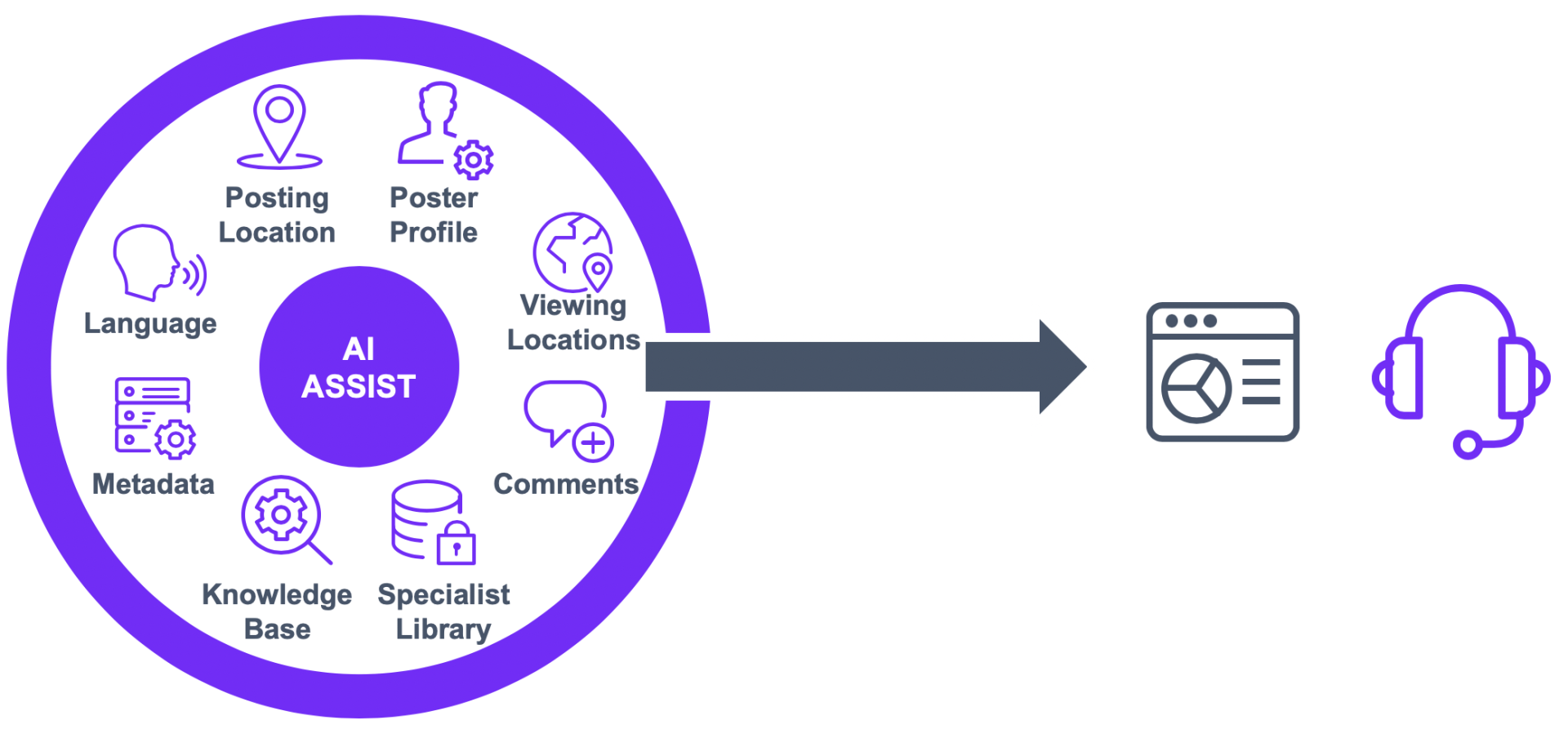

I worked with my client's Content Moderation team, which specialized in catching uploaded videos intended to promote violent extremism or terrorist activity. Each team member receives 5-10 videos daily to review and must reach an appropriate decision based on the client's policies. They have to pay close attention to patterns in the videos, such as time of day, location, and user accounts that may have been associated with terrorist groups and activity in the past. Working with my team's AI division, we brainstormed AI assistant tools that could be implemented in the team's current portal or raised for discussion with their leadership.

How can we utilize artificial intelligence tools to provide the right information for accurate moderation of flagged videos?

UNDERSTANDING THE USER

USER ANALYSIS

My first step was to set up a kickoff meeting with my team and the moderation team to understand their current tool, how they feel about their job, and what they think as a group. Each person had their own opinions and recommendations on what they wanted to see, but the consensus on their current process and tool can be summarized in two statements:

"There are so many videos that need to be reviewed, but you can see similarities such as people, items, and audio that are being used."

"The amount of information I need to know changes daily and it's hard to keep up with the latest trends of certain terrorist groups."

Shortly after, I set up one-on-one interviews with the moderators, as I was told that each person had their own moderation process. I had each person walk me through their process while I observed their steps and the difficulties that came up. Afterwards, they told me about their experience and the pain points they felt needed improvement.

DESIGNING THE SOLUTION

PROTOTYPE

The prototype is designed for a two-monitor setup. The left monitor would be used for completing the main tasks, such as viewing the video and its details.

Pain point:

"I watch the video multiple times because something could potentially flash on the screen that I might have missed the first time watching."

Solution:

Using AI tools, we can detect certain imagery, explicit content, or items that usually raise red flags.

After watching the video, the moderators have to determine the correct action to take on the user and the video, so a quick reference to the decision trees and database is available.

The right monitor would be used for supplementary research and responds to what the AI tool finds in the video. For example, if the user's IP address maps to a certain location, the AI tool can help find similar accounts that the team has taken action on in the past.

Pain point:

"Since our team reviews so many videos every hour, it's hard to catch on to certain patterns that come up, like a large number of videos being uploaded from the same location."

Solution:

We wanted to use AI to make it easier for the team to document the patterns they find and to stay up to date on all related videos.

We included tools such as a location map, an association graph, and an AI assistant that moderators can ask questions or use to find additional documentation online.

We finalized the design with the client team and confirmed with our AI team that everything we had created was feasible. We then created a high-fidelity mockup and presented a demonstration of how our solution could help the moderators judge videos more accurately and use their time more efficiently. You can view a recording of the prototype below:

HOW TO IMPROVE

FINAL THOUGHTS

As a designer, I feel it is important to study the data and tools we use and how they function, so we can best understand how we should design. I spent time at the beginning of the project learning how AI models work, which allowed me to brainstorm new ways that AI could be used in the platform. In future work, I plan to involve development teams in earlier stages of the project so I can learn how they might use my designs and hear their thoughts on how the product could be designed.